Unlocking the AI Value of Your Most Sensitive Data

How OPAQUE and AMD Make Trust Verifiable with Confidential AI

The Enterprise AI Challenge

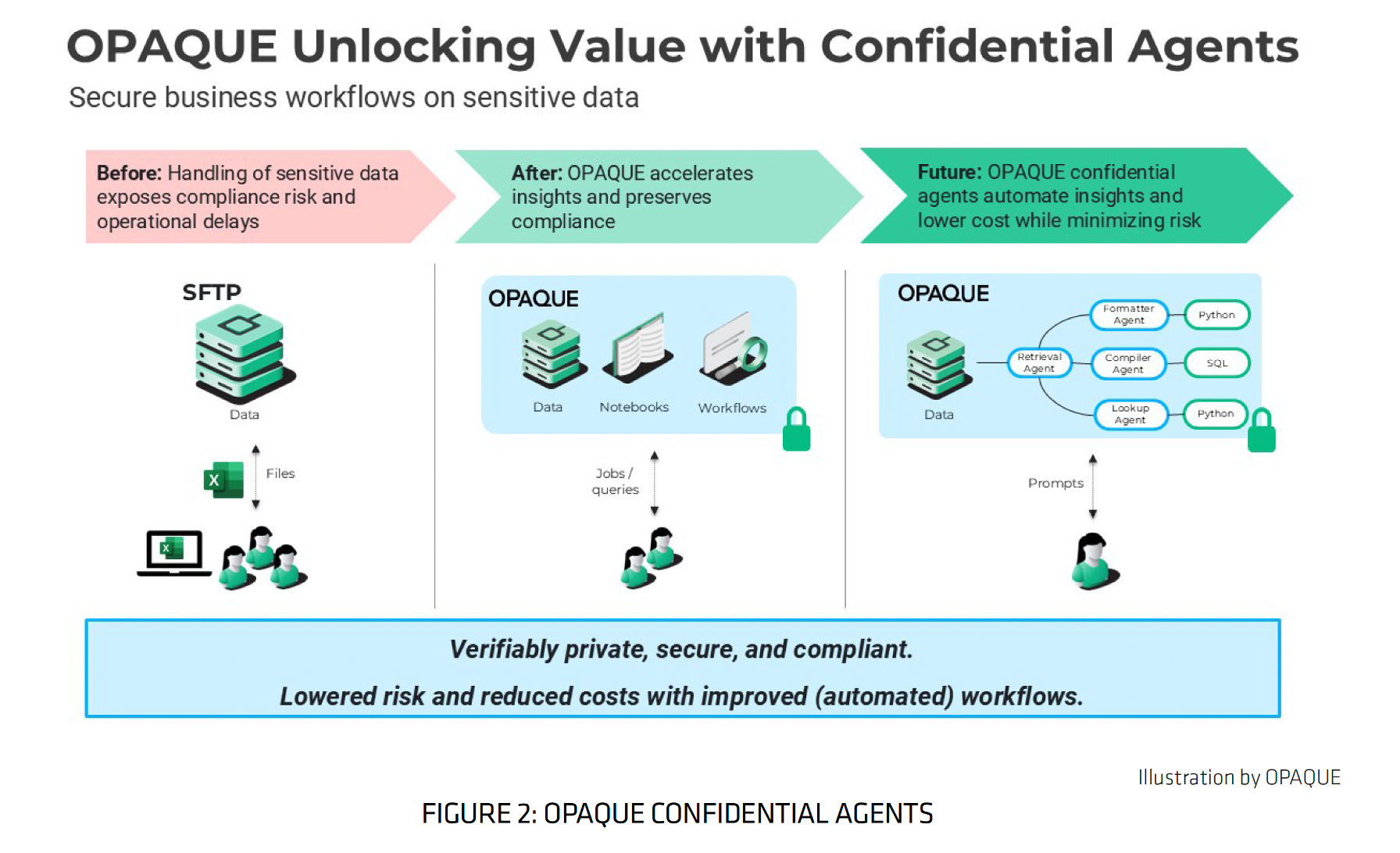

As AI adoption accelerates and AI systems grow more powerful, enterprises face mounting pressure to harness sensitive, regulated, and proprietary data for innovation and competitive advantage while complying with strict regulations, preventing breaches, and maintaining trust. Yet most enterprises hit a major roadblock: the privacy-utility tradeoff. Their most valuable data (customer records, financial information, healthcare data) remains largely off limits to AI workflows because of security and compliance concerns. The core question holding enterprises back from operationalizing AI is how to use sensitive data without exposing it.

The Privacy–Utility Tradeoff Holding Back Enterprise AI

You have the proprietary data needed to build world-class AI agents, but moving that data into the cloud or shared environments often means "de-risking" it through anonymization or masking—techniques that frequently degrade data quality and reduce model accuracy. Traditional encryption approaches protect data at rest and in transit, but leave it vulnerable to exposure during AI processing since data must often be decrypted to be used. This security gap has become one of the biggest sources of risk in modern AI systems, especially as organizations operate across cloud environments, partners, and jurisdictions, and it has kept enterprises from realizing AI's full potential. Until now.

The Solution: AMD SEV and OPAQUE Confidential AI

OPAQUE and AMD address this challenge head-on in a new joint white paper, From Risk to Resilience: Confidential Computing with AMD and OPAQUE. It explores how confidential computing and Confidential AI close this gap by running workloads inside hardware-based Trusted Execution Environments (TEEs) that protect data while it is in use. These environments keep data and code isolated, even from cloud operators, system administrators, or compromised infrastructure. For security, risk, and data leaders, protecting data in use is the missing third pillar of data protection, alongside protection at rest and in transit. It is what makes AI with sensitive data viable at scale, and it is quickly becoming essential infrastructure for enterprise AI.

This collaboration demonstrates how combining AMD's hardware-backed security with OPAQUE's verifiable runtime governance enables enterprises to finally unlock their most sensitive data for AI innovation. It highlights how AMD Secure Encrypted Virtualization (SEV) technology, featured in AMD EPYC™ data center CPUs, creates a strong foundation for confidential computing and powers virtual machines (VMs) that protect data while it is being processed. At the silicon layer, AMD SEV delivers hardware-enforced memory encryption, cryptographic attestation of the execution environment, integrity protection against tampering, and more, with broad ecosystem support and no application code changes required. This allows enterprises to run sensitive workloads in the cloud with significantly reduced trust assumptions.
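To make the attestation idea more concrete, here is a minimal Python sketch of the check a relying party might run before releasing data to a confidential VM: compare the VM's reported launch measurement with the expected value for approved code, and confirm that a fresh nonce is bound into the report so an old report cannot be replayed. This is a hypothetical simplification, not an AMD or OPAQUE API. The `AttestationReport` class is modeled only loosely on the measurement and report-data fields of an SEV-SNP attestation report, the use of a plain SHA-384 digest as the "golden" measurement is an assumption, and the step a real verifier must perform first, validating the report's signature against AMD's certificate chain, is deliberately omitted.

```python
import hashlib
import hmac
import secrets
from dataclasses import dataclass


@dataclass(frozen=True)
class AttestationReport:
    """Hypothetical, heavily simplified attestation report.

    Assumes the report's signature has already been validated against the
    AMD certificate chain; that step is not shown here.
    """
    measurement: bytes  # launch measurement of the VM's initial code and state
    report_data: bytes  # verifier-chosen data bound into the report (here, a nonce)


def expected_measurement(approved_image: bytes) -> bytes:
    # Hypothetical stand-in for the "golden" measurement of approved code.
    # Real launch measurements are computed by the AMD secure processor.
    return hashlib.sha384(approved_image).digest()


def verify_report(report: AttestationReport, golden: bytes, nonce: bytes) -> bool:
    """Accept only if the VM runs the expected code and the report is fresh."""
    # Constant-time comparisons avoid leaking how many bytes matched.
    code_ok = hmac.compare_digest(report.measurement, golden)
    fresh = hmac.compare_digest(report.report_data[: len(nonce)], nonce)
    return code_ok and fresh


# Usage: the verifier picks a fresh nonce for each attestation request.
golden = expected_measurement(b"approved-inference-image-v1")
nonce = secrets.token_bytes(32)

good = AttestationReport(measurement=golden, report_data=nonce + bytes(32))
wrong_code = AttestationReport(
    measurement=expected_measurement(b"patched-image"), report_data=nonce + bytes(32)
)
replayed = AttestationReport(measurement=golden, report_data=secrets.token_bytes(64))

print(verify_report(good, golden, nonce))        # True
print(verify_report(wrong_code, golden, nonce))  # False
print(verify_report(replayed, golden, nonce))    # False (nonce does not match)
```

Only when a check like this passes would data, or the key that decrypts it, be released to the VM.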

But hardware alone isn’t enough to operationalize AI securely.

OPAQUE Confidential AI: Verifiable Governance on Hardware-Backed Trust

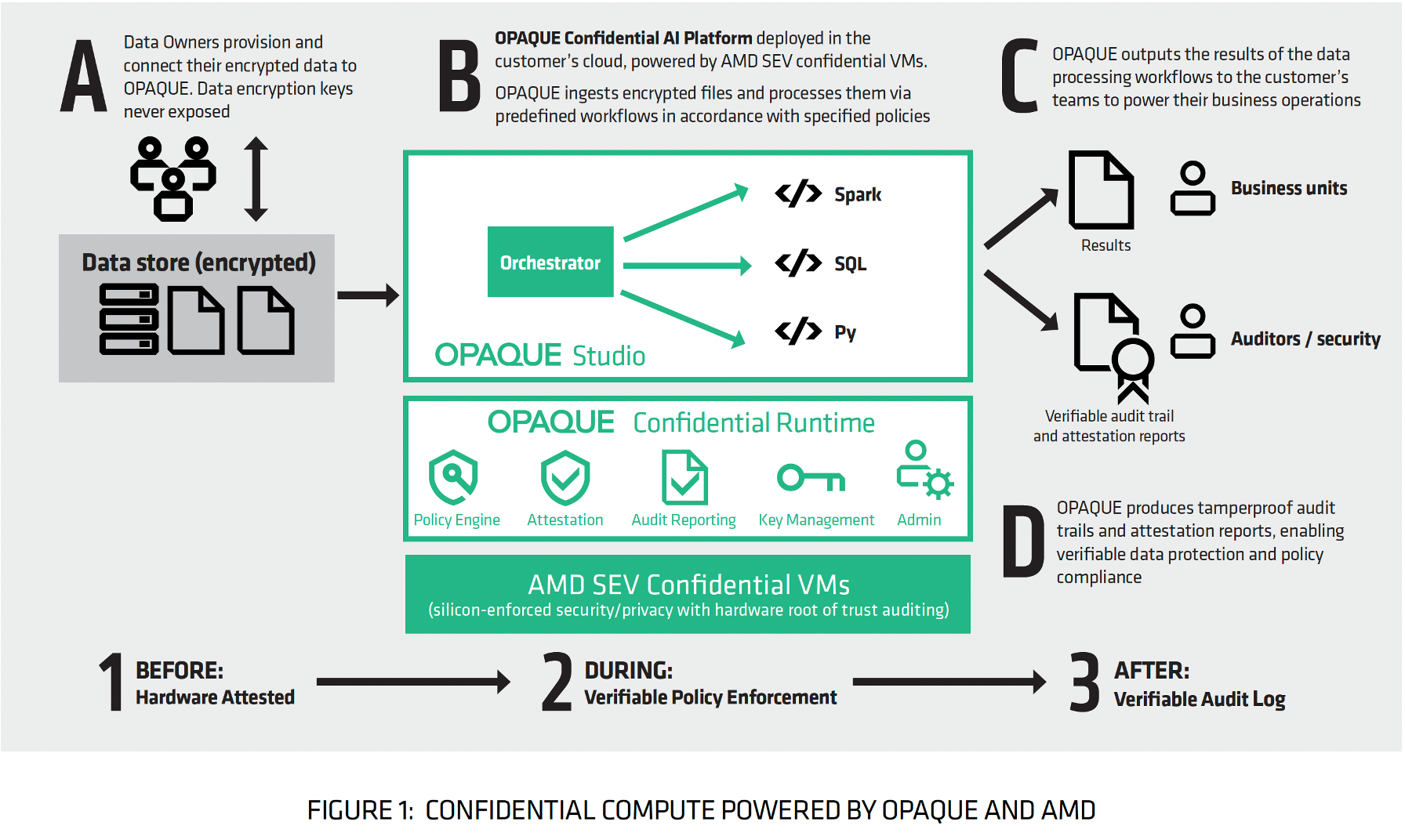

OPAQUE builds on the AMD SEV technology foundation, delivering software-level verifiable runtime governance, remote attestation, policy enforcement, and cryptographic audit logs on top of hardware-backed trust. With the OPAQUE Confidential AI Platform, enterprises get an end‑to‑end solution for AI that ensures every AI workflow, agent, and model processes data securely, with cryptographic proof that data and model weights remain private and that policies are enforced before, during, and after runtime:

- BEFORE: Attest – Remote attestation cryptographically verifies that AI workloads run on genuine AMD SEV confidential VMs with expected code before any sensitive data is processed.

- DURING: Enforce – Verifiable runtime policy enforcement ensures data remains encrypted throughout its lifecycle, including during AI execution, with cryptographic proof that access controls and data-use policies are enforced at runtime. If any cryptographic measurement fails or a violation is identified, the system automatically blocks the workload.

- AFTER: Audit – Exportable, tamper‑proof audit logs and attestation reports provide cryptographic proof of how data was processed and which policies were enforced, giving auditors and regulators concrete evidence of compliance and data protection.
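One common way to make an audit log tamper-evident is hash chaining: each entry stores the hash of the previous entry, so altering, reordering, or deleting an earlier record breaks every later link. The Python sketch below is a generic illustration of that technique, not OPAQUE's log format; the `AuditLog` class, the event fields, and the choice of SHA-256 over canonical JSON are all hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # hash value used before the first entry


def _canonical(event: dict) -> str:
    # Canonical JSON so the same event always hashes the same way.
    return json.dumps(event, sort_keys=True, separators=(",", ":"))


@dataclass
class AuditLog:
    """Hypothetical append-only log where each entry commits to the previous one."""
    entries: list = field(default_factory=list)
    head: str = GENESIS

    def append(self, event: dict) -> str:
        digest = hashlib.sha256((self.head + _canonical(event)).encode()).hexdigest()
        self.entries.append({"event": event, "prev": self.head, "hash": digest})
        self.head = digest
        return digest


def verify_chain(entries: list, expected_head: str) -> bool:
    """Recompute every link and compare the final hash with a trusted head."""
    prev = GENESIS
    for entry in entries:
        if entry["prev"] != prev:
            return False
        recomputed = hashlib.sha256((prev + _canonical(entry["event"])).encode()).hexdigest()
        if recomputed != entry["hash"]:
            return False
        prev = entry["hash"]
    return prev == expected_head


# Usage: record one event per phase, then check the history.
log = AuditLog()
log.append({"phase": "attest", "vm": "cvm-01", "result": "pass"})
log.append({"phase": "enforce", "policy": "no-raw-pii-export", "result": "allowed"})
log.append({"phase": "audit", "action": "delete-input", "result": "done"})
trusted_head = log.head

print(verify_chain(log.entries, trusted_head))  # True
log.entries[1]["event"]["result"] = "denied"    # tamper with history
print(verify_chain(log.entries, trusted_head))  # False
```

On its own, a hash chain only detects tampering relative to a trusted head hash. In practice the head would also need to be signed (for example, by a key held inside the TEE) or published somewhere the log's operator cannot rewrite.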

The result? Enterprises can finally unlock proprietary and regulated data to power more accurate AI agents and workflows, with every computation verifiable, every access governed by policy, and every outcome auditable. Confidential AI becomes a trust layer for enterprise AI systems.

A Real‑world Case Study: Securing Consumer Financial Data

A real-world case study in the paper brings this to life. It highlights a deployment with a large U.S. credit management company that previously struggled with manual, insecure processing of sensitive consumer debt files shared across hundreds of debt settlement partners. The challenges were clear: manual workflows were slow and inefficient, sensitive data had to be decrypted for analysis, and enforcing data policies across hundreds of partners was nearly impossible. By moving to OPAQUE on AMD confidential VMs, the company replaced these manual, high-risk workflows with automated, encrypted pipelines while maintaining full auditability and policy control, transforming how it handles sensitive consumer PII. Remote attestation ensures that only trusted AMD SEV confidential VMs can accept data, automated workflows execute only approved queries on encrypted files, and tamper‑proof audit reports provide regulators with cryptographic proof of how data was used and which policies were enforced.
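The case study's two gates (only attested VMs receive data, and only approved queries run) can be sketched as a deny-by-default check. The partner IDs, query names, and the `Request` and `authorize` names below are hypothetical, and the attestation result is assumed to come from a separate verification step. This illustrates the shape of such a policy, not OPAQUE's policy engine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    partner_id: str
    query_id: str
    vm_attested: bool  # result of a prior attestation check (assumed done elsewhere)


# Hypothetical policy: the pre-approved queries each partner may run.
APPROVED_QUERIES = {
    "partner-a": {"balance_summary", "settlement_eligibility"},
    "partner-b": {"settlement_eligibility"},
}


def authorize(req: Request) -> tuple[bool, str]:
    """Deny by default; allow only attested VMs running an approved query."""
    if not req.vm_attested:
        return False, "attestation failed: data not released"
    allowed = APPROVED_QUERIES.get(req.partner_id, set())
    if req.query_id not in allowed:
        return False, f"query {req.query_id!r} not approved for {req.partner_id}"
    return True, "allowed"


# Usage
print(authorize(Request("partner-a", "balance_summary", True)))
# (True, 'allowed')
print(authorize(Request("partner-b", "balance_summary", True)))
# (False, "query 'balance_summary' not approved for partner-b")
print(authorize(Request("partner-a", "balance_summary", False)))
# (False, 'attestation failed: data not released')
```

Denying by default means a new partner or query has no access until it is explicitly added to the policy, which keeps the failure mode safe as the partner list grows.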

The result? Dramatically reduced risk, faster operations, and scalability for growing data volumes:

- Automated, encrypted pipelines replaced manual, high-risk workflows.

- Workflows scaled to support hundreds of partners without sacrificing security.

- Policy enforcement and data deletion are backed by cryptographic proof.

- Tamper-proof audit trails provide regulators with concrete proof of compliance.

- Enhanced security posture kept PII encrypted end-to-end, even during use.

As AMD Corporate VP Madhusudhan Rangarajan notes: "Confidential AI is about turning sensitive data into advantage. AMD is thrilled to power partners like OPAQUE who help customers do that securely and at scale."

Confidential AI: From Compliance Checkbox to Innovation Foundation

As AI systems become more autonomous and more deeply embedded in business operations, trust becomes the limiting factor. Enterprises need to prove not only what their AI does, how it does it, where it runs, and what data it can access, but also which policies are enforced and how outcomes are produced. OPAQUE and AMD believe Confidential AI is the foundation that makes this possible, transforming AI security from a compliance checkbox into a driver of innovation.

As OPAQUE Co-Founder and CTO Rishabh Poddar explains: "Confidential AI allows enterprises to unlock the full value of their most sensitive data without ever exposing it. By combining AMD hardware-backed trust with OPAQUE's software-level enforcement, organizations can now run AI workloads securely on encrypted data at cloud scale. Every computation is cryptographically verifiable, every access governed by policy, and every outcome auditable. This turns data privacy from a compliance checkbox into a foundation for innovation."

If you’re building AI on sensitive, regulated, or proprietary data, this white paper offers a practical blueprint for moving from risk to resilience. Don't let data privacy be the bottleneck that stalls your AI initiatives.

Read it to discover how combining AMD's proven SEV technology with OPAQUE's verifiable Confidential AI Platform unlocks your sensitive data for AI innovation, enforces policy at runtime, and lets you scale securely in the cloud.

Ready to unlock your sensitive data for AI innovation?

👉 Download the white paper: "From Risk to Resilience: Confidential Computing with AMD and OPAQUE"

Contact our team to learn how OPAQUE's Confidential AI Platform unblocks AI value by making trust verifiable.